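Note: even without DeepSeek-R1, you can prepend “Let's think step by step” to your question when using another model, and that model will still lay out a detailed reasoning process. Its reasoning may be less detailed, and its final answer less accurate, than DeepSeek-R1's.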

In practice, prompting DeepSeek-R1 is not very different from prompting other models; there are only a few details to watch.

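1. If your question is complex, or the answer you get with search enabled is unsatisfactory, try turning off “DeepThink” (深度思考). With it off you are using the V3 model, which is still very good.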

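2. Use simple instructions; “DeepThink” will do the thinking for you

Right: Translate this for me

Wrong: Translate this into Chinese, use wording that suits the habits of Chinese readers, keep important nouns in the original language, and pay attention to layout.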

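More wrong examples:

Example 1: I have a very important market research report here. It is long and dense with information. Please read it carefully, think deeply, and then analyze it: what are the most important market trends in this report? Ideally list the three most important trends and explain why you consider them the most important.

Example 2: Here are some examples of disease diagnosis: [example 1], [example 2]. Now, based on the following medical record, diagnose the disease the patient may have. [paste medical record]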

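3. Use complex instructions to activate “DeepThink” (not recommended unless you have some experience: both complex instructions and overly long context can confuse the R1 model)

Right: Translate this into Chinese, use wording that suits the habits of Chinese readers, keep important nouns in the original language, and pay attention to layout.

Wrong: Translate this for me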

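Note: tips 2 and 3 appear to conflict, so weigh one against the other. Start with a simple instruction, and add conditions only when the answer fails to meet a specific requirement.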

Use the following text to test this yourself:

**How does better chunking lead to high-quality responses?**

If you’re reading this, I can assume you know what chunking and RAG are. Nonetheless, here is what it is, in short.

LLMs are trained on massive public datasets. Yet, they aren’t updated afterward. Therefore, LLMs don’t know anything after the pretraining cutoff date. Also, your use of LLM can be about your organization’s private data, which the LLM had no way of knowing.

Therefore, a beautiful solution called RAG has emerged. RAG asks the LLM to **answer questions based on the context provided in the prompt itself**. We even ask it not to answer even if the LLM knows the answer, but the provided context is insufficient.

How do we get the context? You can query your database and the Internet, skim several pages of a PDF report, or do anything else.

But there are two problems in RAGs.

* LLM’s **context windows sizes** are limited (Not anymore; I’ll get to this soon!)
* A large context window has a high **signal-to-noise ratio**.

First, early LLMs had limited window sizes. GPT 2, for instance, had only a 1024 token context window. GPT 3 came up with a 2048 token window. These are merely the **size of a typical blog post**.

Due to these limitations, the LLM prompt cannot include an organization’s entire knowledge base. Engineers were forced to reduce the size of their input to the LLM to get a good response.

However, various models with a context window of 128k tokens showed up. This is usually **the size of an annual report** for many listed companies. It is good enough to upload a document to a chatbot and ask questions.

But, it didn’t always perform as expected. That’s because of the noise in the context. A large document easily contains many unrelated information and the necessary pieces. This unrelated information drives the LLM to lose its objective or hallucinate.

This is why we chunk the documents. Instead of sending a large document to the LLM, we break it into smaller pieces and only send the most relevant pieces.

However, this is easier said than done.

There are a million possible ways to break a document into chunks. For instance, you may break the document paragraph by paragraph, and I may do it sentence by sentence. Both are valid methods, but one may work better than the other in specific circumstances.

However, we won’t discuss sentence and paragraph breaks, as they are trivial and have little use in chunking. Instead, we will discuss slightly more complex ones that break documents for RAGs.

In the rest of the post, I’ll discuss a few chunking strategies I’ve learned and applied.

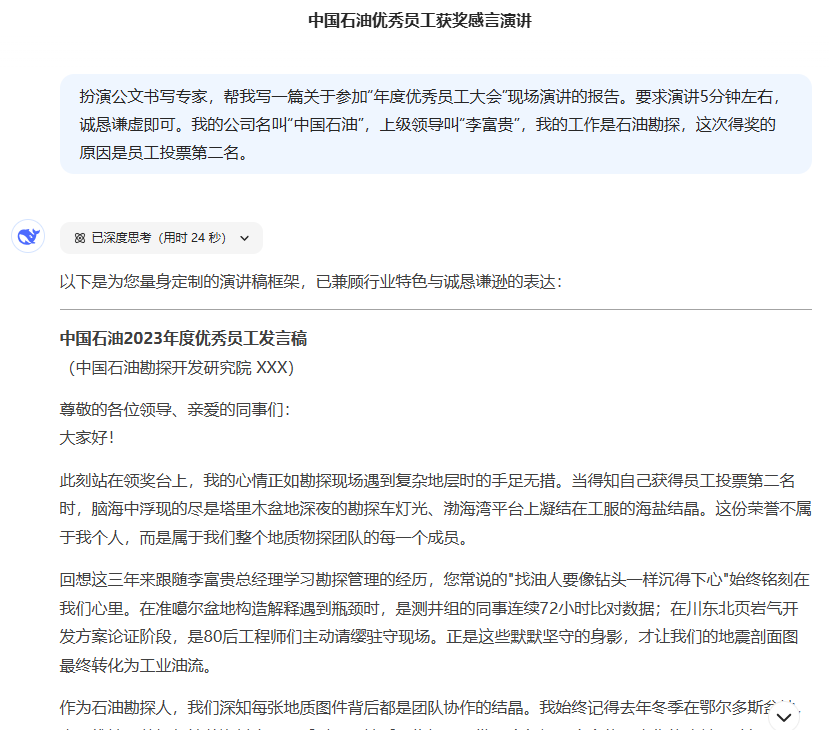

4. Prompt frameworks still work

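Build a good prompting habit. You only need to supply four elements: [role] [action for the model to perform] [task goal] [task background] (the task background is optional)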

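For example: Act as an expert in official document writing and help me write the speech I will deliver on stage at the “Annual Outstanding Employee Conference”. The speech should last about 5 minutes and sound sincere and modest. My company is called “中国石油”, my supervisor is “李富贵” (Li Fugui), my job is oil exploration, and I won the award because I placed second in the employee vote.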

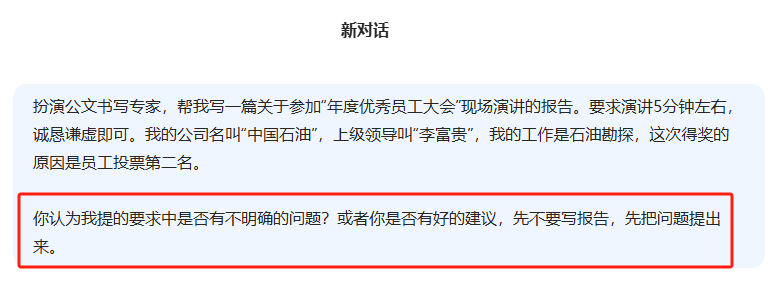
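The four-element framework can be sketched as a small helper that assembles the pieces into one prompt. This is only an illustration: the function name `build_prompt`, its parameter names, and the “Act as ...” wording are assumptions made for this sketch, not part of any DeepSeek API.

```python
def build_prompt(role: str, action: str, goal: str, background: str = "") -> str:
    """Assemble a prompt from [role] [action] [goal] [background].

    Hypothetical helper: the element names follow the framework above,
    and background is optional, as the framework allows.
    """
    for name, value in (("role", role), ("action", action), ("goal", goal)):
        if not value.strip():
            raise ValueError(f"{name} must not be empty")

    pieces = [f"Act as {role.strip()}", action, goal, background]
    # Drop empty elements and make sure each one ends with a single period.
    sentences = [p.strip().rstrip(".") + "." for p in pieces if p.strip()]
    return " ".join(sentences)


if __name__ == "__main__":
    print(build_prompt(
        role="an expert in official document writing",
        action="Help me write a speech for the Annual Outstanding Employee Conference",
        goal="Keep it to about 5 minutes and make it sincere and modest",
        background="I work in oil exploration and placed second in the employee vote",
    ))
```

Keeping the background as an optional argument mirrors the note that the task background is not required.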

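5. Learn to let the model help you ask; good questions get good answers

Look back at the example prompt in tip 4. Do you see the problem?

The description is not detailed enough, so the resulting speech cannot be used as is. For most people, the hard part of using a large language model is not knowing how to ask, or not wanting to spend the effort to fill in the details.

The fix is simple: before trying to construct a perfect question, first ask the model for advice and let it help you refine the question.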
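One way to put this into practice is to wrap your draft question in a request for clarifying questions. The sketch below only builds the text to send; the function name `build_refinement_prompt` and the exact wording of the request are assumptions for illustration, and you would paste the result into whichever chat model you use.

```python
def build_refinement_prompt(draft_prompt: str, max_questions: int = 5) -> str:
    """Wrap a rough question in a request for the model to help improve it.

    Hypothetical helper: the wording is one possible phrasing, not a
    prescribed template.
    """
    if not draft_prompt.strip():
        raise ValueError("draft_prompt must not be empty")
    return (
        "I want to ask a large language model the question below, "
        "but it is probably missing details.\n"
        f"First, ask me up to {max_questions} clarifying questions about anything "
        "that is unclear or missing.\n"
        "After I answer, rewrite my question as a complete prompt.\n\n"
        f"My draft question:\n{draft_prompt.strip()}"
    )


if __name__ == "__main__":
    print(build_refinement_prompt("Write a speech for the award ceremony.", 3))
```

The model's clarifying questions tell you which details are missing; answering them is usually less effort than guessing what a complete prompt needs.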

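6. Make the question directional, or steer R1's reasoning in a direction. This is an old technique and is not specific to R1

Normally the methods below are not needed with reasoning models such as R1, but each case is different: if your question is highly targeted, you can add some short context with simple logic to the question.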

| Prompt_ID | Type | Trigger Sentence | Chinese |
|---|---|---|---|
| 101 | CoT | Let’s think step by step. | 我们一步一步地思考。 |
| 201 | PS | Let’s first understand the problem and devise a plan to solve the problem. Then, let’s carry out the plan to solve the problem step by step. | 首先,让我们理解问题并制定解决问题的计划。然后,让我们按计划一步一步地解决问题。 |
| 301 | PS+ | Let’s first understand the problem, extract relevant variables and their corresponding numerals, and devise a plan. Then, let’s carry out the plan, calculate intermediate variables (pay attention to correct numeral calculation and commonsense), solve the problem step by step, and show the answer. | 首先,让我们理解问题,提取相关的变量和它们对应的数值,然后制定一个计划。接下来,执行计划,计算中间变量(注意正确的数字计算和常识),一步一步地解决问题,并显示答案。 |
| 302 | PS+ | Let’s first understand the problem, extract relevant variables and their corresponding numerals, and devise a complete plan. Then, let’s carry out the plan, calculate intermediate variables (pay attention to correct numerical calculation and commonsense), solve the problem step by step, and show the answer. | 首先,让我们理解问题,提取相关变量及其对应的数值,并制定一个完整的计划。然后,执行计划,计算中间变量(注意正确的数值计算和常识),一步一步解决问题,并显示答案。 |
| 303 | PS+ | Let’s devise a plan and solve the problem step by step. | 让我们制定一个计划并一步一步地解决问题。 |
| 304 | PS+ | Let’s first understand the problem and devise a complete plan. Then, let’s carry out the plan and reason problem step by step. Every step answer the subquestion, “does the person flip and what is the coin’s current state?”. According to the coin’s last state, give the final answer (pay attention to every flip and the coin’s turning state). | 首先,让我们理解问题并制定一个完整的计划。然后,执行计划并逐步解决问题。每一步回答子问题,“人是否翻转,硬币当前状态是什么?”. 根据硬币的最后状态,给出最终答案(注意每次翻转和硬币的翻转状态)。 |
| 305 | PS+ | Let’s first understand the problem, extract relevant variables and their corresponding numerals, and make a complete plan. Then, let’s carry out the plan, calculate intermediate variables (pay attention to correct numerical calculation and commonsense), solve the problem step by step, and show the answer. | 首先,让我们理解问题,提取相关变量及其对应的数值,并制定一个完整的计划。然后,执行计划,计算中间变量(注意正确的数值计算和常识),一步一步解决问题,并显示答案。 |
| 306 | PS+ | Let’s first prepare relevant information and make a plan. Then, let’s answer the question step by step (pay attention to commonsense and logical coherence). | 首先,让我们准备相关信息并制定一个计划。然后,一步一步地回答问题(注意常识和逻辑连贯性)。 |
| 307 | PS+ | Let’s first understand the problem, extract relevant variables and their corresponding numerals, and make and devise a complete plan. Then, let’s carry out the plan, calculate intermediate variables (pay attention to correct numerical calculation and commonsense), solve the problem step by step, and show the answer. | 首先,让我们理解问题,提取相关变量及其对应的数值,并制定一个完整的计划。然后,执行计划,计算中间变量(注意正确的数值计算和常识),一步一步解决问题,并显示答案。 |
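For models without built-in reasoning, a trigger sentence from the table can be attached to a question programmatically. The sketch below copies a few rows (with plain apostrophes) into a dictionary keyed by Prompt_ID; the names `TRIGGERS` and `add_trigger`, and the choice to place the trigger before the question, are assumptions made for illustration.

```python
# A few trigger sentences copied from the table above, keyed by Prompt_ID.
TRIGGERS = {
    101: "Let's think step by step.",
    201: ("Let's first understand the problem and devise a plan to solve the problem. "
          "Then, let's carry out the plan to solve the problem step by step."),
    303: "Let's devise a plan and solve the problem step by step.",
    306: ("Let's first prepare relevant information and make a plan. "
          "Then, let's answer the question step by step "
          "(pay attention to commonsense and logical coherence)."),
}


def add_trigger(question: str, prompt_id: int = 101) -> str:
    """Prepend the chosen trigger sentence to the question.

    Hypothetical helper: raises KeyError for an ID not in TRIGGERS.
    """
    trigger = TRIGGERS[prompt_id]
    return f"{trigger}\n\n{question.strip()}"


if __name__ == "__main__":
    print(add_trigger("A train leaves at 9:40 and arrives at 13:15. How long is the trip?", 303))
```

Looking up by ID keeps the long PS+ sentences out of your own prompts and makes it easy to compare how different triggers change the answer.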

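7. Remove ambiguity from the question

Use your experience to proactively remove possible ambiguity, because reasoning models readily make “assumptions” about your question on your behalf. As in the wrong examples above, a flawed question leads to flawed assumptions, and the answer ends up wrong too.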

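8. You have to choose between input length and reasoning depth

Overly long input suppresses reasoning and makes the reasoning drift; short input strengthens reasoning and keeps it focused. The two are mutually exclusive.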

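9. Control the output format

See the prompt framework in tip 4. You can append an [output format] element at the end to constrain the format of the model's output.

Output format control comes in two kinds: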

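1. Layout

Example 1: Output in Markdown and format the content

Example 2: The article should be ready to paste into Word

2. Template

Example 1: The generated article must have three parts: introduction, explanation, summary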
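The two kinds of format control can be appended to any prompt as a final [output format] element. The following is a minimal sketch; the function name `with_output_format`, its parameters, and the bracketed section label are assumptions chosen for illustration.

```python
from typing import Optional, Sequence


def with_output_format(prompt: str,
                       layout: Optional[str] = None,
                       sections: Optional[Sequence[str]] = None) -> str:
    """Append an [Output format] element to a prompt.

    Hypothetical helper: `layout` covers the layout kind (e.g. Markdown),
    `sections` covers the template kind (the parts the article must have).
    """
    rules = []
    if layout:
        rules.append(f"Layout: {layout}")
    if sections:
        rules.append("Template: split the article into these parts: " + ", ".join(sections))
    if not rules:
        return prompt  # nothing to constrain, leave the prompt unchanged
    return prompt.rstrip() + "\n\n[Output format]\n" + "\n".join(f"- {r}" for r in rules)


if __name__ == "__main__":
    print(with_output_format(
        "Write an article about document chunking.",
        layout="Markdown",
        sections=["introduction", "explanation", "summary"],
    ))
```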
